Featured Posts

6 Christian Comedians to Watch in 2024

Laugh out loud with the best Christian comedians! Experience humor that's both wholesome and hilarious.

By Angel Studios | March 28, 2024

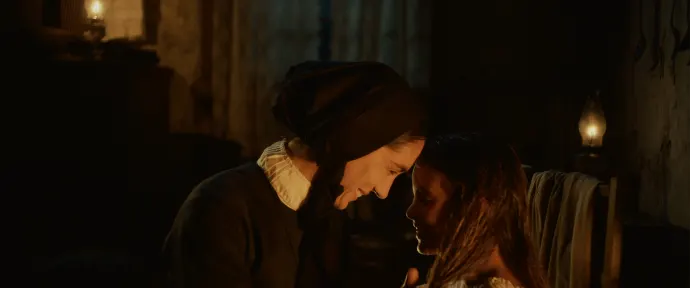

Updates on David Helling's Upcoming Biblical Epic: Jacob

His Only Son touched the hearts of Angel Guild members and moviegoers worldwide, and Jacob will pick up right where that story leaves off.

By Angel Studios | March 26, 2024